but I don't see stutters, I only get a headache after a while

Some 3dfx Fun

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

-

MD-2389

- Defender of the Night

- Posts: 13477

- Joined: Thu Nov 05, 1998 12:01 pm

- Location: Olathe, KS

- Contact:

Umm....if you're touting image quality, then you should really look at those two images you posted again. Grendel's 1280 shot looks a hell of a lot better than your 6000 shot. Grendel's shot actually has AA enabled, and the colors are a HELL of a lot more vibrant. You're shooting yourself in the foot with that argument dude.

"One spelling mistake can destroy your life. A Husband sent this to his wife : "I'm having a wonderful time. Wish you were her." - @RobinWilliams

-

MD-2389

- Defender of the Night

- Posts: 13477

- Joined: Thu Nov 05, 1998 12:01 pm

- Location: Olathe, KS

- Contact:

Re:

Xamindar wrote:Umm MD, take another look. I think you are getting them mixed up.

Nope, maybe YOU should look again.

Grendel's 6800U shot:

Obi's 3dfx 6000 shot

click me

Take a good look, and then come back.

Oh Obi, it would also help if you post shots WITH the fps counter enabled.

"One spelling mistake can destroy your life. A Husband sent this to his wife : "I'm having a wonderful time. Wish you were her." - @RobinWilliams

People need to pick a gamma level and use uncompressed files at the same resolution; then we can do proper comparisons. To me Obi-clone's pictures look more washed out, but Grendel's is too dark, because of the above.

beyond that, the banding in the sky on Grendel's shot is obvious.

the fps counter has nothing to do with image quality, and AA comparisons here are pointless - there aren't enough polys for it to be worthwhile.

-

MD-2389

- Defender of the Night

- Posts: 13477

- Joined: Thu Nov 05, 1998 12:01 pm

- Location: Olathe, KS

- Contact:

Re:

fliptw wrote:the fps counter has nothing to do with image quality

Right, I was referring back to one of his posts when he mentioned how many frames he got. This would further back up his arguments as supporting evidence. Probably should've made that a little clearer.

"One spelling mistake can destroy your life. A Husband sent this to his wife : "I'm having a wonderful time. Wish you were her." - @RobinWilliams

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Info removed access denied

This was also the talk among my friends and me back in the days of the voodoo. voodoo, or glide, always looked better than nvidia and opengl. And back then D3D was a joke. We never knew why, just that we saw it, and review sites noted it as well.

Kind of surprising to see it still the same. Though I bet if you compared a screenshot of Halflife2 or any other more recent game it would be the other way around.

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

sorry but I felt like removing this

- Krom

- DBB Database Master

- Posts: 16058

- Joined: Sun Nov 29, 1998 3:01 am

- Location: Camping the energy center. BTW, did you know you can have up to 100 characters in this location box?

- Contact:

/me owns a Voodoo3 3000 AGP 16MB card and a Diamond Monster 3D II 8 MB PCI card. I've seen 3dfx and glide plenty in action, aside from the sky, everything else looks better on a geforce.

You can't even compare a voodoo5 to a geforce in HL2 or any other recent game, since the voodoo5 can't run HL2 or any other recent game either for that matter. So that is a totally unfair comparison. The voodoo5 is a fixed function graphics accelerator, it is not programmable like everything from the GF3 and up has been. The voodoo5 would have been a good product, if it had come to market 2 years sooner. It was already out of the race by the time it reached the market.

And the sky is the only thing that looks crappy, plus the sky still looks just as crappy on your favorite ATI hardware. That's the advantage of D3 being programmed for glide: unless it is updated, OpenGL/D3D will never match the image quality you get in Glide. D3 is the exception among games from its day; by the time D3 came out most developers were moving away from glide support.

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Krom wrote:/me owns a Voodoo3 3000 AGP 16MB card and a Diamond Monster 3D II 8 MB PCI card. I've seen 3dfx and glide plenty in action, aside from the sky, everything else looks better on a geforce.

You can't even compare a voodoo5 to a geforce in HL2 or any other recent game, since the voodoo5 can't run HL2 or any other recent game either for that matter. So that is a totally unfair comparison. The voodoo5 is a fixed function graphics accelerator, it is not programmable like everything from the GF3 and up has been. The voodoo5 would have been a good product, if it had come to market 2 years sooner. It was already out of the race by the time it reached the market.

And the sky is the only thing that looks crappy, plus the sky still looks just as crappy on your favorite ATI hardware. That's the advantage of D3 being programmed for glide: unless it is updated, OpenGL/D3D will never match the image quality you get in Glide. D3 is the exception among games from its day; by the time D3 came out most developers were moving away from glide support.

ever played Descent3 with T&L disabled on a 6800 Ultra? then it's fair play, right

You have to remember that the Voodoo5 6000 is a prototype and not a production card that everyone has. There is also a Voodoo5 6000 with 4ns SDRAM and cores/mem @ 240MHz; that card hits 170 fps with the same driver as mine. That is the max power of a Voodoo5 6000, and it would have been the chosen production speed of that card.

3dfx Glide is actually 22-bit colour, not 16. That's why it looks better than 16-bit D3D in many cases, and also better than 16-bit and 32-bit OpenGL.

The Voodoo5 6000 isn't made for Half-Life 2, though it does run fluid @ 48 avg. frames per sec @ low details @ 1600 x 1200 x 16 with MesaFX 6.2.0.2 hehe. But then Half-Life 2 looks better on an ATi Radeon X1900XT or GeForce 7800GTX 512 than on a Voodoo5, since the newer cards of today are made to run games with advanced shader models, which the 6000 does not have.

in Glide games 3dfx cards are simply the best, and in that shot from the 6800 Ultra I can still see jaggies. bad FSAA I must say. my 6000 had no FSAA ... okay, a higher reso, but even then with 4x FSAA the GeForce 6800 should show no jaggies at all, so it still looks bad.

anyways I have tested about 59 3dfx cards of all sorts and there is a big difference in image quality between a Voodoo3 aka Avenger and a Voodoo5 aka Napalm. if you really like to discuss these things I'd say drop by and find out the true nature of 3dfx. I have seen too many off topic things here.

I posted something about 3dfx fun, not to be hindered by off topic situations.

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

fliptw wrote:if you can't hold a worthwhile discussion here, then we have no good reason to go to the board you've linked to - outside of the fact this forum already went thru this 6 years ago, when all of this was germane.

why no one has posted a 32-bit d3d screenshot is beyond me.

Well, I post something and then it goes offtrack, which isn't the idea, and the site I posted is one of the best 3dfx sources around.

I can make 32-bit Glide shots too if you like. it's a setting that normally is disabled but can be enabled in the driver itself. the only difference is that the colours are a little darker, no great difference though

Holding a good conversation isn't the problem, but when things go offtopic, where has the good conversation gone?

What happened in the past is something I can't change for you, but sorry to hear that. At FalconFly.de, VoodooAlert.de, x-3dfx.com and 3dfxzone.it we have had our problems also, that happens everywhere, but it is still sad to send people away.

Obi, how did you get the 3DFx cards to reconcile with Dx 8 and 9 fx? Never could get my V3 3k to run the more advanced stuff.

(btw, sry for the skim earlier. I went back through and read all the posts. ...Thanks MD!)

All this 3DFX talk makes me wish that Aureal 3D was still around. I have a Vortex 1 card that put out sound that I have seen..or rather heard.. equaled (save maybe the AWE32 which I've never owned or heard)

hey Obi-Wan Kenobi, how did you get the 6000? Did you buy it from that guy? How much was it? Man, I remember seeing those years ago right when 3dfx went under and drooling for one. I adore strange technology like that.

When 3dfx closed did the engineers take all the 6000 and sell them? It's kind of strange that they got out into the public, but cool!

-

MD-2389

- Defender of the Night

- Posts: 13477

- Joined: Thu Nov 05, 1998 12:01 pm

- Location: Olathe, KS

- Contact:

Re:

Obi-Wan Kenobi wrote:Well look at the sky it's crappy the Voodoo5 6000 still PWNs the crappyness of the nvidia card,

Must be your monitor then because it looks great compared to yours on mine. I even looked at the two on the laptop's widescreen LCD display and the difference between the two was like night and day.

Obi-Wan Kenobi wrote:like I said Descent3 was made for 3dfx glide not D3D that was planned later, then tell me why were there so many fixes for Direct3D and not Glide

Maybe because Outrage did a piss poor job of implementing D3D? Gee, maybe that's why damn near everyone is using OpenGL instead?

Obi-Wan Kenobi wrote:Once again if you really want to know the real truth it's time you visited http://www.falconfly-central.de/cgi-bin/yabb/YaBB.pl

there you will learn the true power of 3dfx and yes you will be confronted and yes your theorie is very wrong, I have told you, but looks like your belief is only stick'n to your self heh .

heh, my theory is wrong? My my my, aren't we on the defensive. Maybe you should check your own info before you start coming down on other users. D3 is built for DX6 support. If you do a little research, you'll note that D3 does not support T&L. T&L will only work in DX7 and later games.

Look, I don't have any problem with someone posting about 3DFX, boasting that they can still run the hardware, or just getting excited about the material. My only problem is with you bashing those of us that actually use and like nVidia hardware. "nVidiots" I believe is what you called us. That's only going to make YOU look like the fool, because instead of properly rebutting anything, you're just throwing insults. That's generally considered bad form in any kind of a debate.

It might help if you posted screenshots WITHOUT the boosted gamma (because that's what makes your screenshot look washed out by comparison), in the original TGA format (so we won't have to deal with "that looks worse because of the compression artifacts" postings), at the same resolution as everyone else. Then we can have a true comparison.
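To put a rough number on the gamma point: the sketch below is only an illustration, not anything taken from D3 or from either card's driver. It assumes a simple power-law gamma applied to 8-bit channel values, and the function names `apply_gamma` and `contrast_range` are made up for this example. It shows that boosting gamma lifts the dark tones while squeezing the range left over for the bright ones, which is one plausible reason a boosted shot looks washed out next to an uncorrected one.

```python
def apply_gamma(value, gamma):
    """Apply a power-law gamma to one 8-bit channel value (0-255).

    gamma > 1 brightens the mid-tones, gamma == 1 leaves the value alone.
    This is an assumed, simplified model of a driver gamma slider.
    """
    normalized = value / 255.0
    return round(255.0 * normalized ** (1.0 / gamma))


def contrast_range(dark, bright, gamma):
    """Distance between two tones after the gamma curve has been applied."""
    return apply_gamma(bright, gamma) - apply_gamma(dark, gamma)


if __name__ == "__main__":
    for gamma in (1.0, 1.5):
        print(
            f"gamma {gamma}: dark tone 64 -> {apply_gamma(64, gamma)}, "
            f"range between 128 and 255 -> {contrast_range(128, 255, gamma)}"
        )
```

Black and white stay where they are, but everything in between gets pushed up, so two shots taken at different gamma settings can't really be compared for "vibrance".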

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Duper wrote:Obi, how did you get the 3DFx cards to reconcile with Dx 8 and 9 fx? Never could get my V3 3k to run the more advanced stuff.

(btw, sry for the skim earlier. I went back through and read all the posts. ...Thanks MD!)

All this 3DFX talk makes me wish that Aureal 3D was still around. I have a Vortex 1 card that put out sound that I have seen..or rather heard.. equaled (save maybe the AWE32 which I've never owned or heard)

well for DX 8 support there is SFFT 35, the best driver for NT systems. it uses SFFT Alpha 29 for the DX8.1 core

SFFT 35

Use Koolsmokey's Voodoo Control for the control panel:

Voodoo Control

you can use the SFFT 35 driver only under Win2KPro and XPHome/Pro

http://www.traumatic.de/pphlogger/dlcou ... n9x-29.zip

And WoW you still got the Aureal Vortex! Good lord that Card rules Always wanted the Aureal Vortex 1 and 2

Well I hope the driver brings more light to your Voodoo3. btw the Voodoo3 is limited in running games like UT 2K3 because of its 16MB RAM, but it should run fine in 800 x 600 mode

- Krom

- DBB Database Master

- Posts: 16058

- Joined: Sun Nov 29, 1998 3:01 am

- Location: Camping the energy center. BTW, did you know you can have up to 100 characters in this location box?

- Contact:

Re:

Obi-Wan Kenobi wrote:ever played Descent3 with T&L disabled on a 6800 Ultra then it's a fair play right

[dyk]Descent 3 is a Directx 6 game, thus no matter what video card you are using Transform and Lighting in the hardware is automatically disabled.[/dyk]

Re:

Duper wrote:All this 3DFX talk makes me wish that Aureal 3D was still around. I have a Vortex 1 card that put out sound that I have seen..or rather heard.. equaled (save maybe the AWE32 which I've never owned or heard)

Yeah, I loved them as well. It seems that all the cool companies get squashed by the punk ones. The 3D sound on those cards was simply amazing! Despite the low sound quality, they were great.

Re:

Obi-Wan Kenobi wrote: didn't Creative buy Aureal?

haha yeah, right after suing them into oblivion over a midi chip of all things.

-

MD-2389

- Defender of the Night

- Posts: 13477

- Joined: Thu Nov 05, 1998 12:01 pm

- Location: Olathe, KS

- Contact:

Re:

Obi-Wan Kenobi wrote:and WoW you still got the Aureal Vortex! Good lord that Card rules Always wanted the Aureal Vortex 1 and 2. I still make use of my good ole AWE 64 Gold! hehe but that Aureal 3D is awesome I must say, good to see that those cards are still being used, too bad that they aren't around. didn't Creative buy Aureal?

Yeah, Creative bought them out like four or five years ago. There was a big stink about that too. Almost as big as the 3dfx/nVidia one. I remember a rather large flame war on the old Volition BB about that.

"One spelling mistake can destroy your life. A Husband sent this to his wife : "I'm having a wonderful time. Wish you were her." - @RobinWilliams

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Obi-Wan Kenobi wrote:ever played Descent3 with T&L disabled on a 6800 Ultra then it's a fair play right

Krom wrote:[dyk]Descent 3 is a Directx 6 game, thus no matter what video card you are using Transform and Lighting in the hardware is automatically disabled.[/dyk]

nope didn't know that, since I only play the game on Voodoo cards, what it was built for lol.

I have seen it in action on a 6800 and the Voodoo still PWNs it in graphical detail. well that's my point of view not yours, everyone has their choice of graphics, I hope that isn't illegal

- Krom

- DBB Database Master

- Posts: 16058

- Joined: Sun Nov 29, 1998 3:01 am

- Location: Camping the energy center. BTW, did you know you can have up to 100 characters in this location box?

- Contact:

My few years with 3Dfx cards were fantastic, the Voodoo2 and Voodoo3 were the undisputed kings of 3D at the time and I was quite happy with them. But by the time the Voodoo5 hit the market, Nvidia had already gotten bigger and better products to the market.

Since I upgraded from my old Voodoo3, I have used a Vanilla GF3, then a FX 5900 Ultra, and now a 6800 GT. My experience with Nvidia hardware and drivers has been excellent, 3dfx drivers were few and far between by the time I got around to ditching the cards.

I seem to recall talk about the voodoo rendering in 22 bit color internally, but that's actually nothing to brag about because all Nvidia cards since the TNT2 have rendered at 32 bit internally even if they were set to 16 bit color and working on 16 bit textures, ATI is the same way. 3dfx just had a better dithering pattern.

I'm really curious what graphical detail you are talking about, I just took a look around D3 and I can't really see any missing details from my old system in glide mode. The only thing that is different is the sky texture has far less blur to it than on 3dfx hardware. For everything else, thanks to 4x AA and 16x AF I have applied, jagged edges are almost completely gone, and textures are clear and sharp even at hard angles to a huge distance. I remember moving from 3dfx to Nvidia hardware and I don't remember any lack of detail from the transition. So what is the graphical detail that gets owned?
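For what it's worth, the 16-bit vs "22-bit" dithering point can be illustrated with a toy example. This is only a sketch under stated assumptions: a single 5-bit channel, a made-up 2x2 ordered-dither threshold tile, and a plain 2x2 average standing in for an output filter. It is not the actual 3dfx or Nvidia dithering pattern or filter, and every name in it is hypothetical. It shows how a dithered 16-bit-style framebuffer, averaged over neighbouring pixels, can land closer to the original shade than straight rounding does.

```python
import math

LEVELS_5BIT = 31  # highest value a 5-bit colour channel can hold

# Assumed per-pixel thresholds for a 2x2 ordered-dither tile (illustrative only).
THRESHOLDS_2X2 = [[0.125, 0.625], [0.875, 0.375]]


def to_8bit(level):
    """Scale a 5-bit level back to the 0-255 range."""
    return level * 255 / LEVELS_5BIT


def quantize_plain(value):
    """Round an 8-bit value to the nearest 5-bit level, no dithering."""
    return to_8bit(round(value * LEVELS_5BIT / 255))


def quantize_dithered(value, x, y):
    """Quantize to 5 bits, nudged by a threshold that depends on pixel position."""
    level = math.floor(value * LEVELS_5BIT / 255 + THRESHOLDS_2X2[y % 2][x % 2])
    return to_8bit(min(level, LEVELS_5BIT))


def filtered_2x2(value):
    """Average one 2x2 block of dithered pixels, like a crude output filter."""
    samples = [quantize_dithered(value, x, y) for y in range(2) for x in range(2)]
    return sum(samples) / len(samples)


if __name__ == "__main__":
    shade = 100
    print("plain 5-bit:      ", quantize_plain(shade))
    print("dithered+filtered:", filtered_2x2(shade))
```

With these assumptions the filtered result can take four in-between steps per 5-bit level, i.e. roughly two extra bits per channel, which is the general idea behind quoting more than 16 bits for a dithered 16-bit framebuffer.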

I recall reading the project journal of the senior coder/project head (I can't remember his name) on the Outrage site years ago (long before "blogs" were in vogue), among other interesting stuff. He mentioned that coding for the 3 different video APIs was a nightmare. Descent 3 was coded with 3DFX in mind, and D3D second (which looked great back then but frame lagged horribly), but coding for OGL along with the other two was Very difficult. He said that they were diametrically opposed, and he didn't really expect it to work as well as he would have liked. If you recall, about that time THE SHOWDOWN for graphic acceleration was in full swing. And as Descent had several other Voodoo products "supporting" it (well, more like cheering it on), doing D3 in Glide only made sense.

When my daughter leaves, I'll throw that V3 card along with the Vortex card back in her box with 98 SE and play Descent on it only.

btw. I noticed that D3 only requires Dx3 to run, but you need Dx 6.0 for all the Direct3D "goodies" in the game. Dx 6.1 came with the game. (this is from the setup screen)

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

NV FSAA Sux big time to be honest

3dfx and ATi still have the best FSAA.

Did you know that my pic was without FSAA @ 1600 x 1200 x 16 in Glide? yours was 1280 x 1024 x 16 in Direct3D with FSAA x4 AF x16. well your FSAA is like FSAA x2 on a Voodoo5 LoL, a big laugh imho.

here is a pic from the Voodoo5 5500 AGP from week 25 year 2000, and look at the time difference also

I used Reso 1024 x 768 x 16 in Glide + FSAA x4. looks nice and dark doesn't it? this is also because of the Rotated Grid FSAA, and that is also why your pic was darker than mine. that is a typical FSAA effect: FSAA minimizes the jagged edges and it makes the image somewhat darker.

you people should be happy about the 3dfx Voodoo5 because it's the first graphics accelerator that made FSAA useful. it produced by far better 2D and 3D image quality than the GeForce2 in its time. to compare a GeForce 6 something with a Voodoo5 is like comparing a pear and an apple lol.

But my point is that the FSAA quality hasn't changed in these 5.5 years, which is seriously embarrassing. I have talked about this with other 3dfx people and ex-3dfx engineers, and even they totally agreed.

Anyways, for best quality and speed in games of today I use my ATi X850 XT Platinum Edition. I know that there are nicer ATi cards like the X1800 and X1900, but money is my main concern; the X850XT PE AGP will do fine.

the 3dfx Voodoo cards are a nice but elusive hobby. collecting cards like that and bringing them back to life is for me more fun than seeing common mass produced cards in action lol, nothing so special about that. anyways I posted this topic for some nice remembering of the good ole days of 3dfx. the Voodoo5 was also one of the best for Flight Sim people like me

here Anandtech explains why:

FSAA is where the Voodoo5 5500 really shines - it's performance is close to that of the GeForce 2 GTS, but the image quality is noticeably better, at least in our opinion. Whether you need the FSAA effects or not is entirely up to the individual gamer to decide on. If the focus of your game play is first person shooters where high frame rate is critical and there's little time to notice eye candy, then you probably won't get any real benefit from the FSAA support of the Voodoo5. On the other hand, if you're really into racing games or flight simulators where frame rate is less critical and there's more time to take in the visuals, then FSAA definitely comes in very useful.

I got that from here:

http://www.anandtech.com/showdoc.aspx?i=1276&p=24

.." In this topic all think voodoo ...

if you aren't a voodoo user this is not the right place for you, simple and plain."...

AmigaMerlin

After buying a Riva 128 instead of a Voodoo1 and a Riva TNT instead of a Voodoo2, I promised myself that I'd only buy 3dfx for the rest of my life because of how much the nVidia cards sucked. So instead of going TNT2, I went Voodoo3, and instead of going Geforce2, I went Voodoo5. I don't regret it for a second (except for the part where I promised myself 3dfx for life) and I used my Voodoo5's through 2002. I still have 2 NiB Voodoo5 5500's (well... one was opened, then closed... when I realized it wouldn't fit in a modern motherboard).

-Suncho

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Suncho wrote:After buying a Riva 128 instead of a Voodoo1 and a Riva TNT instead of a Voodoo2, I promised myself that I'd only buy 3dfx for the rest of my life because of how much the nVidia cards sucked. So instead of going TNT2, I went Voodoo3, and instead of going Geforce2, I went Voodoo5. I don't regret it for a second (except for the part where I promised myself 3dfx for life) and I used my Voodoo5's through 2002. I still have 2 NiB Voodoo5 5500's (well... one was opened, then closed... when I realized it wouldn't fit in a modern motherboard).

yeaps that is right

but after many hours of getting these ultra rare prototypes working in games, that gave me the kick: 3dfx prototypes never seen on the market playing the games that they were made for. that was my whole point, and then I get off topic bullcrap like "my GeForce 6800 is better and so on". I don't accept that childish behaviour, no one would if someone behaved like a 12 year old in their topic. that is why I got pissed, like anybody else would.

but yeaps, use a full size ATX/WTX case and the 5500 AGP will fit easily

I'm still looking for the Add-Tronics W8600 WTX Server tower for the Voodoo5 6000 system

Obi-Wan Kenobi wrote:hahah lol well a Voodoo5 6000 is some what like 5 years older than the 6800GT and it's like you really need that 290 fps, the human eye can't even see that so what's the point? --> Answer pointless for the user and great marketing for nVidia yippie!!

No, that's not the answer. =P

It's not just about fps or how many fps one can see. It's about eliminating system lag, and that's where cards like the 6800 GT and the 7900 GTX deliver. =P Your Voodoo 5 6000 is just a bottleneck, unlike the 6800 GT.

And again, it's not about comparing the 6800 GT to the Voodoo 5, because, like you said, that's unfair. It's about saying that Krom and I don't have such a bottleneck in our systems. ^_~
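A quick bit of arithmetic on the fps figures thrown around in this thread (170, 250, 290 and 1000 fps) may help with the "lag" argument. The snippet is just a sketch: `frame_time_ms` and `time_saved_ms` are names made up here, and it only converts frame rate into the time one frame takes. It says nothing about input lag in any particular game or driver.

```python
def frame_time_ms(fps):
    """Time spent on a single frame, in milliseconds."""
    return 1000.0 / fps


def time_saved_ms(slow_fps, fast_fps):
    """How much sooner each frame is finished at the higher frame rate."""
    return frame_time_ms(slow_fps) - frame_time_ms(fast_fps)


if __name__ == "__main__":
    for fps in (170, 250, 290, 1000):
        print(f"{fps:>4} fps -> {frame_time_ms(fps):.2f} ms per frame")
    print(f"250 -> 1000 fps saves {time_saved_ms(250, 1000):.2f} ms per frame")
```

So the difference between 250 and 1000 fps is a few milliseconds per frame; whether that matters is exactly what is being argued here.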

MD, I think you are taking what Obi-Wan is saying too personally. =P To be honest, being called "nVidiot" and such makes me feel a bit competitive, but not insulted. =)

You know what, Obi-Wan, you're probably right: 3Dfx was probably a better company, but honestly who cares about image quality? The image quality of nVidia cards is good enough for me (except maybe the FX series =P) and plus I can get 1000 fps in D3 as opposed to the Voodoo5's 250 or whatever; so what if ATi has the performance crown right now? The 7900 GTX will probably sm0ke that thing when Anand tests it today, =P and nVidia produces an all around better card, so that's why I prefer nVidia over ATi, and 3Dfx is dead, =P so the only option is nVidia.

By the way, I didn't mean to get defensive or anything or go off topic, when I posted my comment that I'll get 1000 fps while you play with your Voodoo 5, I was just being silly. ^_~

Zero, Behemoth, FOIL, Terminal, Neo. The greatest pilots in the universe. :P

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Suncho wrote:I have a 6800 as well, and I dream of the day when nVidia starts supporting Glide. =)

Hm I can help you with that. a friend of mine from Romania called Zeckensack made a very good Glide wrapper which enables 3dfx Glide on GeForce4 and ATi Radeon 8500 and up

http://www.zeckensack.de/glide/archive/ ... er084c.exe

it's really near the real thing,but a Voodoo5 card gives the best performance in glides games with FSAA ofcourse , this Glide program is the clossest you can get to 3dfx Glide it's self.

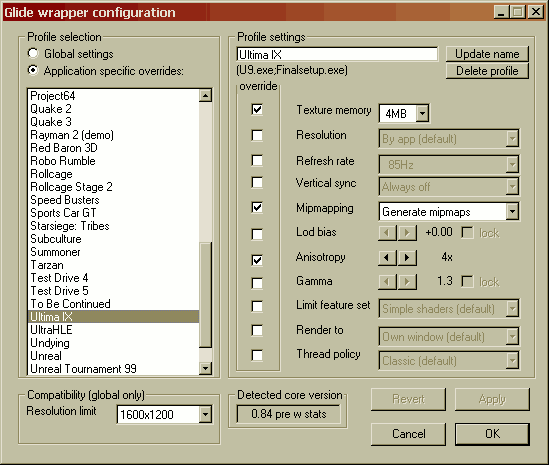

here a pic of how the Glide Mod looks like:

with this program you have the abillity to choose or make your own profiles also!

here the requirements for this great program

and here the official site

http://www.zeckensack.de/glide/index.html

Now Glide is possible on your modern cards as well

Re:

Obi-Wan Kenobi wrote:NV FSAA Sux big time to be honest

3dfx and ATi still have the best FSAA.

Yes, but keep in mind it doesn't matter too much in a FPS. It's mainly good for making nice stills ..

Another interesting bit of info is that even tho the 6800 IQ went up quite a lot w/ the 70 & 80 drivers, my old GF Ti4600 still beats the living crap out of a 6800 (IQ wise in D3). That's probably the reason for the 4600 disappearing rather quickly after the GF 5xxx hit the shelves ..

- Krom

- DBB Database Master

- Posts: 16058

- Joined: Sun Nov 29, 1998 3:01 am

- Location: Camping the energy center. BTW, did you know you can have up to 100 characters in this location box?

- Contact:

Re:

Did you even look at that picture? That jpeg is so over-compressed it's not even amusing as an image quality comparison shot. Also the geforce 6 series and up use rotated grid FSAA, so the quality is virtually identical if not better because the grid pattern is better.

Just for fun, let's toss in a PNG image of GF6 AA shot. This image was only converted directly from tga to png using "save for the web" in adobe imageready CS2. That means there is NO gamma correction applied and that is what makes images from screen shots so dark not the FSAA, I could apply some gamma correction but that would wash out the colors.

So where are the details that get owned? Are you going to run away forever and just laugh at your own jokes, saying your FSAA is better by showing horribly compressed jpegs from years ago? Remember, it isn't 2001 anymore; the FSAA patterns in GeForce 6 cards are nothing like the patterns used on the GeForce 2.

Or perhaps, I remember reading a blog from the Outrage team talking about working in all three APIs for rendering (Glide, D3D and OpenGL), and their goal was to have all three APIs render as close to the same image as possible. Could it be that there are no magical missing details that get owned on GeForce cards, and saying something general like "the details get owned" is all you could say because you don't have anything real or specific to point out?
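As a rough illustration of the gamma point above (a minimal sketch, not anything taken from ImageReady or the game itself): gamma correction is commonly modelled as a power curve applied to each normalized color channel. The function names and the 2.2 value are assumptions picked for the example.

```python
def apply_gamma(value, gamma):
    """Map one 8-bit channel value (0-255) through a simple gamma curve.

    Hypothetical helper: gamma > 1 brightens, gamma == 1 leaves the value alone.
    """
    normalized = value / 255.0
    return round(255.0 * normalized ** (1.0 / gamma))


def apply_gamma_to_pixel(pixel, gamma):
    """Apply the same curve to every channel of an (R, G, B) tuple."""
    return tuple(apply_gamma(channel, gamma) for channel in pixel)


if __name__ == "__main__":
    # Assumed example values: black, a dark tone, a mid tone, and white.
    for level in (0, 64, 128, 255):
        print(level, "->", apply_gamma(level, 2.2))
```

Pure black and pure white stay where they are while the dark and mid tones get lifted, which is roughly why a gamma-corrected screenshot looks brighter but can also look flatter.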

-

MD-2389

- Defender of the Night

- Posts: 13477

- Joined: Thu Nov 05, 1998 12:01 pm

- Location: Olathe, KS

- Contact:

That's the one thing I liked about the 4600. It blew the crap out of the majority of the FX line in sheer performance alone. If it had the same pixel shaders that are in use today, along with the same OpenGL support, then I have no doubt that it would be a stellar performer.

I'll have to give that glide wrapper a try, just for kicks. Last time I used one was back when I ran an N64 emulator on a Geforce3 Ti200. (Still have that card btw)

I'd also be interested in a true comparison of the 6800 and the 6000, just for kicks. Let's shoot for 1280x960 with 4x FSAA (my 6800GS supports 8x FSAA supersample, but I'd like to see a comparison on "even ground") and whatever anisotropic filter level your Voodoo card can support with trilinear mip-mapping. Just to keep the artifact argument out of the equation, let's use the default targa format that D3 uses. Let's also stick to 16-bit rendering, since that's something all three APIs support in D3 without issues.

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Krom wrote: Did you even look at that picture? That jpeg is so over-compressed it's not even amusing as an image quality comparison shot. Also the GeForce 6 series and up use rotated-grid FSAA so the quality is virtually identical if not better because the grid pattern is better.

Just for fun, let's toss in a PNG image of a GF6 AA shot. This image was only converted directly from tga to png using "save for the web" in Adobe ImageReady CS2. That means there is NO gamma correction applied, and that, not the FSAA, is what makes images from screenshots so dark. I could apply some gamma correction, but that would wash out the colors.

Hmm, nice story. What's next, a party at the neighbour's house? I mean, all you can say is that you do not agree with me, right?

If a ball comes your way, it does not mean you have to catch it.

But you must do what you feel is best, of course. That is not my concern, and it is also something I can't decide for you. I sense your hatred; please let go of that hatred and come back to your senses.

- Gold Leader

- DBB Ace

- Posts: 247

- Joined: Tue Jan 17, 2006 6:39 pm

- Location: Guatamala, Tatooine, Yavin IV

- Contact:

Re:

Floyd wrote: mine is bigger

Mkay

@ Grendel

I still have a boxed Leadtek WinFast A250 ULTRA TD, aka Leadtek GeForce4 Ti4600, 100% complete in box here, and yes, I totally agree with your point there: it was the best card nVidia ever made.

- Krom

- DBB Database Master

- Posts: 16058

- Joined: Sun Nov 29, 1998 3:01 am

- Location: Camping the energy center. BTW, did you know you can have up to 100 characters in this location box?

- Contact:

Did you even read the second half of that post? I assume since you didn't actually answer anything in the entire post that it is because you are unable to come up with an answer to anything in that post. Please prove me wrong and actually come up with a real response. I will consider any points you have to make. If you insist on talking about the ball and whose court it is in, that last response is equivalent to having the ball bounce off of your face.